
| author | Forrest L Norvell <forrest@npmjs.com> | 2016-11-04 01:59:48 +0300 |
|---|---|---|
| committer | Forrest L Norvell <forrest@npmjs.com> | 2016-11-04 05:18:08 +0300 |
| commit | 1d9159440364d2fe21e8bc15e08e284aaa118347 | |
| tree | c38faf71bbe97a58ec12323598d7e07e87004f8e /node_modules/request | |
| parent | e0023c089ded9161fbcbe544f12b07e12e3e5729 | |

request@2.78.0

Reviewed-By: @othiym23

Diffstat (limited to 'node_modules/request')

91 files changed, 1723 insertions, 7247 deletions

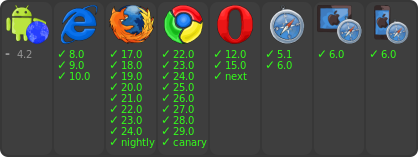

    diff --git a/node_modules/request/.travis.yml b/node_modules/request/.travis.yml
    index 9be8247c7..643e6551b 100644
    --- a/node_modules/request/.travis.yml
    +++ b/node_modules/request/.travis.yml
    @@ -5,7 +5,6 @@ node_js:
       - node
       - 6
       - 4
    -  - 0.12

     after_script:
       - npm run test-cov
    diff --git a/node_modules/request/CHANGELOG.md b/node_modules/request/CHANGELOG.md
    index 042c6e526..be7949cea 100644
    --- a/node_modules/request/CHANGELOG.md
    +++ b/node_modules/request/CHANGELOG.md
    @@ -1,5 +1,25 @@
     ## Change Log

    +### v2.78.0 (2016/11/03)
    +- [#2447](https://github.com/request/request/pull/2447) Always set request timeout on keep-alive connections (@mscdex)
    +
    +### v2.77.0 (2016/11/03)
    +- [#2439](https://github.com/request/request/pull/2439) Fix socket 'connect' listener handling (@mscdex)
    +- [#2442](https://github.com/request/request/pull/2442) 👻😱 Node.js 0.10 is unmaintained 😱👻 (@greenkeeperio-bot)
    +- [#2435](https://github.com/request/request/pull/2435) Add followOriginalHttpMethod to redirect to original HTTP method (@kirrg001)
    +- [#2414](https://github.com/request/request/pull/2414) Improve test-timeout reliability (@mscdex)
    +
    +### v2.76.0 (2016/10/25)
    +- [#2424](https://github.com/request/request/pull/2424) Handle buffers directly instead of using "bl" (@zertosh)
    +- [#2415](https://github.com/request/request/pull/2415) Re-enable timeout tests on Travis + other fixes (@mscdex)
    +- [#2431](https://github.com/request/request/pull/2431) Improve timeouts accuracy and node v6.8.0+ compatibility (@mscdex, @greenkeeperio-bot)
    +- [#2428](https://github.com/request/request/pull/2428) Update qs to version 6.3.0 🚀 (@greenkeeperio-bot)
    +- [#2420](https://github.com/request/request/pull/2420) change .on to .once, remove possible memory leaks (@duereg)
    +- [#2426](https://github.com/request/request/pull/2426) Remove "isFunction" helper in favor of "typeof" check (@zertosh)
    +- [#2425](https://github.com/request/request/pull/2425) Simplify "defer" helper creation (@zertosh)
    +- [#2402](https://github.com/request/request/pull/2402) form-data@2.1.1 breaks build 🚨 (@greenkeeperio-bot)
    +- [#2393](https://github.com/request/request/pull/2393) Update form-data to version 2.1.0 🚀 (@greenkeeperio-bot)
    +
     ### v2.75.0 (2016/09/17)
     - [#2381](https://github.com/request/request/pull/2381) Drop support for Node 0.10 (@simov)
     - [#2377](https://github.com/request/request/pull/2377) Update form-data to version 2.0.0 🚀 (@greenkeeperio-bot)
    @@ -493,15 +513,9 @@
     - [#662](https://github.com/request/request/pull/662) option.tunnel to explicitly disable tunneling (@seanmonstar)
     - [#659](https://github.com/request/request/pull/659) fix failure when running with NODE_DEBUG=request, and a test for that (@jrgm)
     - [#630](https://github.com/request/request/pull/630) Send random cnonce for HTTP Digest requests (@wprl)
    -
    -### v2.27.0 (2013/08/15)
     - [#619](https://github.com/request/request/pull/619) decouple things a bit (@joaojeronimo)
    -
    -### v2.26.0 (2013/08/07)
     - [#613](https://github.com/request/request/pull/613) Fixes #583, moved initialization of self.uri.pathname (@lexander)
     - [#605](https://github.com/request/request/pull/605) Only include ":" + pass in Basic Auth if it's defined (fixes #602) (@bendrucker)
    -
    -### v2.25.0 (2013/07/23)
     - [#596](https://github.com/request/request/pull/596) Global agent is being used when pool is specified (@Cauldrath)
     - [#594](https://github.com/request/request/pull/594) Emit complete event when there is no callback (@RomainLK)
     - [#601](https://github.com/request/request/pull/601) Fixed a small typo (@michalstanko)
    @@ -574,7 +588,7 @@
     - [#290](https://github.com/request/request/pull/290) A test for #289 (@isaacs)
     - [#280](https://github.com/request/request/pull/280) Like in node.js print options if NODE_DEBUG contains the word request (@Filirom1)
     - [#207](https://github.com/request/request/pull/207) Fix #206 Change HTTP/HTTPS agent when redirecting between protocols (@isaacs)
    -- [#214](https://github.com/request/request/pull/214) documenting additional behavior of json option (@jphaas)
    +- [#214](https://github.com/request/request/pull/214) documenting additional behavior of json option (@jphaas, @vpulim)
     - [#272](https://github.com/request/request/pull/272) Boundary begins with CRLF? (@elspoono, @timshadel, @naholyr, @nanodocumet, @TehShrike)
     - [#284](https://github.com/request/request/pull/284) Remove stray `console.log()` call in multipart generator. (@bcherry)
     - [#241](https://github.com/request/request/pull/241) Composability updates suggested by issue #239 (@polotek)
    @@ -592,10 +606,10 @@
     - [#246](https://github.com/request/request/pull/246) Fixing the set-cookie header (@jeromegn)
     - [#243](https://github.com/request/request/pull/243) Dynamic boundary (@zephrax)
     - [#240](https://github.com/request/request/pull/240) don't error when null is passed for options (@polotek)
    -- [#211](https://github.com/request/request/pull/211) Replace all occurrences of special chars in RFC3986 (@chriso)
    +- [#211](https://github.com/request/request/pull/211) Replace all occurrences of special chars in RFC3986 (@chriso, @vpulim)
     - [#224](https://github.com/request/request/pull/224) Multipart content-type change (@janjongboom)
     - [#217](https://github.com/request/request/pull/217) need to use Authorization (titlecase) header with Tumblr OAuth (@visnup)
    -- [#203](https://github.com/request/request/pull/203) Fix cookie and redirect bugs and add auth support for HTTPS tunnel (@milewise)
    +- [#203](https://github.com/request/request/pull/203) Fix cookie and redirect bugs and add auth support for HTTPS tunnel (@vpulim)
     - [#199](https://github.com/request/request/pull/199) Tunnel (@isaacs)
     - [#198](https://github.com/request/request/pull/198) Bugfix on forever usage of util.inherits (@isaacs)
     - [#197](https://github.com/request/request/pull/197) Make ForeverAgent work with HTTPS (@isaacs)
    diff --git a/node_modules/request/README.md b/node_modules/request/README.md
    index 6eaaa0547..a0b6c84d0 100644
    --- a/node_modules/request/README.md
    +++ b/node_modules/request/README.md
    @@ -762,6 +762,7 @@ The first argument can be either a `url` or an `options` object. The only requir

     - `followRedirect` - follow HTTP 3xx responses as redirects (default: `true`). This property can also be implemented as function which gets `response` object as a single argument and should return `true` if redirects should continue or `false` otherwise.
     - `followAllRedirects` - follow non-GET HTTP 3xx responses as redirects (default: `false`)
    +- `followOriginalHttpMethod` - by default we redirect to HTTP method GET. you can enable this property to redirect to the original HTTP method (default: `false`)
     - `maxRedirects` - the maximum number of redirects to follow (default: `10`)
     - `removeRefererHeader` - removes the referer header when a redirect happens (default: `false`). **Note:** if true, referer header set in the initial request is preserved during redirect chain.

    diff --git a/node_modules/request/index.js b/node_modules/request/index.js
    index 911a90dbb..9ec65ea26 100755
    --- a/node_modules/request/index.js
    +++ b/node_modules/request/index.js
    @@ -18,8 +18,7 @@ var extend = require('extend')
       , cookies = require('./lib/cookies')
       , helpers = require('./lib/helpers')

    -var isFunction = helpers.isFunction
    -  , paramsHaveRequestBody = helpers.paramsHaveRequestBody
    +var paramsHaveRequestBody = helpers.paramsHaveRequestBody


     // organize params for patch, post, put, head, del
    @@ -95,7 +94,7 @@ function wrapRequestMethod (method, options, requester, verb) {
           target.method = verb.toUpperCase()
         }

    -    if (isFunction(requester)) {
    +    if (typeof requester === 'function') {
           method = requester
         }

    diff --git a/node_modules/request/lib/helpers.js b/node_modules/request/lib/helpers.js
    index 356ff748e..f9d727e38 100644
    --- a/node_modules/request/lib/helpers.js
    +++ b/node_modules/request/lib/helpers.js
    @@ -3,17 +3,9 @@
     var jsonSafeStringify = require('json-stringify-safe')
       , crypto = require('crypto')

    -function deferMethod() {
    -  if (typeof setImmediate === 'undefined') {
    -    return process.nextTick
    -  }
    -
    -  return setImmediate
    -}
    -
    -function isFunction(value) {
    -  return typeof value === 'function'
    -}
    +var defer = typeof setImmediate === 'undefined'
    +  ? process.nextTick
    +  : setImmediate

     function paramsHaveRequestBody(params) {
       return (
    @@ -63,7 +55,6 @@ function version () {
       }
     }

    -exports.isFunction = isFunction
     exports.paramsHaveRequestBody = paramsHaveRequestBody
     exports.safeStringify = safeStringify
     exports.md5 = md5
    @@ -71,4 +62,4 @@ exports.isReadStream = isReadStream
     exports.toBase64 = toBase64
     exports.copy = copy
     exports.version = version
    -exports.defer = deferMethod()
    +exports.defer = defer
    diff --git a/node_modules/request/lib/redirect.js b/node_modules/request/lib/redirect.js
    index 040dfe0e0..f8604491f 100644
    --- a/node_modules/request/lib/redirect.js
    +++ b/node_modules/request/lib/redirect.js
    @@ -8,6 +8,7 @@ function Redirect (request) {
       this.followRedirect = true
       this.followRedirects = true
       this.followAllRedirects = false
    +  this.followOriginalHttpMethod = false
       this.allowRedirect = function () {return true}
       this.maxRedirects = 10
       this.redirects = []
    @@ -36,6 +37,9 @@ Redirect.prototype.onRequest = function (options) {
       if (options.removeRefererHeader !== undefined) {
         self.removeRefererHeader = options.removeRefererHeader
       }
    +  if (options.followOriginalHttpMethod !== undefined) {
    +    self.followOriginalHttpMethod = options.followOriginalHttpMethod
    +  }
     }

     Redirect.prototype.redirectTo = function (response) {
    @@ -115,7 +119,7 @@ Redirect.prototype.onResponse = function (response) {
       )
       if (self.followAllRedirects && request.method !== 'HEAD'
         && response.statusCode !== 401 && response.statusCode !== 307) {
    -    request.method = 'GET'
    +    request.method = self.followOriginalHttpMethod ? request.method : 'GET'
       }
       // request.method = 'GET' // Force all redirects to use GET || commented out fixes #215
       delete request.src
    diff --git a/node_modules/request/node_modules/aws4/README.md b/node_modules/request/node_modules/aws4/README.md
    index 6c55da805..6b002d02f 100644
    --- a/node_modules/request/node_modules/aws4/README.md
    +++ b/node_modules/request/node_modules/aws4/README.md
    @@ -434,6 +434,15 @@ request(aws4.sign({
     /*
     (HTTP 202, empty response)
     */
    +
    +// Generate CodeCommit Git access password
    +var signer = new aws4.RequestSigner({
    +  service: 'codecommit',
    +  host: 'git-codecommit.us-east-1.amazonaws.com',
    +  method: 'GIT',
    +  path: '/v1/repos/MyAwesomeRepo',
    +})
    +var password = signer.getDateTime() + 'Z' + signer.signature()
     ```

     API
    diff --git a/node_modules/request/node_modules/aws4/aws4.js b/node_modules/request/node_modules/aws4/aws4.js
    index cbe5dc904..a54318065 100644
    --- a/node_modules/request/node_modules/aws4/aws4.js
    +++ b/node_modules/request/node_modules/aws4/aws4.js
    @@ -52,6 +52,8 @@ function RequestSigner(request, credentials) {
       }
       if (!request.hostname && !request.host)
         request.hostname = headers.Host || headers.host
    +
    +  this.isCodeCommitGit = this.service === 'codecommit' && request.method === 'GIT'
     }

     RequestSigner.prototype.matchHost = function(host) {
    @@ -109,7 +111,7 @@ RequestSigner.prototype.prepareRequest = function() {

       } else {

    -    if (!request.doNotModifyHeaders) {
    +    if (!request.doNotModifyHeaders && !this.isCodeCommitGit) {
           if (request.body && !headers['Content-Type'] && !headers['content-type'])
             headers['Content-Type'] = 'application/x-www-form-urlencoded; charset=utf-8'

    @@ -153,6 +155,9 @@ RequestSigner.prototype.getDateTime = function() {
           date = new Date(headers.Date || headers.date || new Date)

         this.datetime = date.toISOString().replace(/[:\-]|\.\d{3}/g, '')
    +
    +    // Remove the trailing 'Z' on the timestamp string for CodeCommit git access
    +    if (this.isCodeCommitGit) this.datetime = this.datetime.slice(0, -1)
       }
       return this.datetime
     }
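
The CodeCommit change to `getDateTime()` is easiest to follow on a concrete date. The sketch below uses a hypothetical standalone helper, `amzDateTime` (not an aws4 function), that applies the same `toISOString()` rewrite and, for CodeCommit Git access, the same `slice(0, -1)`. The aws4 README hunk then appends `'Z'` again when it builds the password from `getDateTime()` and `signature()`.

```javascript
// Hypothetical helper mirroring the timestamp logic in the getDateTime() hunk;
// it is not part of aws4's exported API.
function amzDateTime(date, isCodeCommitGit) {
  // '2016-11-03T12:34:56.789Z' -> '20161103T123456Z':
  // drop the '-' and ':' separators and the '.sss' milliseconds
  var datetime = date.toISOString().replace(/[:\-]|\.\d{3}/g, '')
  // CodeCommit Git access uses the timestamp without the trailing 'Z'
  if (isCodeCommitGit) datetime = datetime.slice(0, -1)
  return datetime
}

var when = new Date(Date.UTC(2016, 10, 3, 12, 34, 56, 789))
console.log(amzDateTime(when, false)) // 20161103T123456Z
console.log(amzDateTime(when, true)) // 20161103T123456
```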
    @@ -202,8 +207,8 @@ RequestSigner.prototype.canonicalString = function() {
           decodePath = this.service === 's3' || this.request.doNotEncodePath,
           decodeSlashesInPath = this.service === 's3',
           firstValOnly = this.service === 's3',
    -      bodyHash = this.service === 's3' && this.request.signQuery ?
    -        'UNSIGNED-PAYLOAD' : hash(this.request.body || '', 'hex')
    +      bodyHash = this.service === 's3' && this.request.signQuery ? 'UNSIGNED-PAYLOAD' :
    +        (this.isCodeCommitGit ? '' : hash(this.request.body || '', 'hex'))

       if (query) {
         queryStr = encodeRfc3986(querystring.stringify(Object.keys(query).sort().reduce(function(obj, key) {
    diff --git a/node_modules/request/node_modules/aws4/package.json b/node_modules/request/node_modules/aws4/package.json
    index 7151d7aae..4e7caf0d3 100644
    --- a/node_modules/request/node_modules/aws4/package.json
    +++ b/node_modules/request/node_modules/aws4/package.json
    @@ -10,24 +10,23 @@
             "spec": ">=1.2.1 <2.0.0",
             "type": "range"
           },
    -      "/Users/rebecca/code/npm/node_modules/request"
    +      "/Users/ogd/Documents/projects/npm/npm/node_modules/request"
         ]
       ],
       "_from": "aws4@>=1.2.1 <2.0.0",
    -  "_id": "aws4@1.4.1",
    +  "_id": "aws4@1.5.0",
       "_inCache": true,
    -  "_installable": true,
       "_location": "/request/aws4",
    -  "_nodeVersion": "4.4.3",
    +  "_nodeVersion": "4.5.0",
       "_npmOperationalInternal": {
    -    "host": "packages-12-west.internal.npmjs.com",
    -    "tmp": "tmp/aws4-1.4.1.tgz_1462643218465_0.6527479749638587"
    +    "host": "packages-16-east.internal.npmjs.com",
    +    "tmp": "tmp/aws4-1.5.0.tgz_1476226259635_0.2796843808609992"
       },
       "_npmUser": {
         "name": "hichaelmart",
         "email": "michael.hart.au@gmail.com"
       },
    -  "_npmVersion": "2.15.4",
    +  "_npmVersion": "2.15.11",
       "_phantomChildren": {},
       "_requested": {
         "raw": "aws4@^1.2.1",
    @@ -41,11 +40,11 @@
       "_requiredBy": [
         "/request"
       ],
    -  "_resolved": "https://registry.npmjs.org/aws4/-/aws4-1.4.1.tgz",
    -  "_shasum": "fde7d5292466d230e5ee0f4e038d9dfaab08fc61",
    +  "_resolved": "https://registry.npmjs.org/aws4/-/aws4-1.5.0.tgz",
    +  "_shasum": "0a29ffb79c31c9e712eeb087e8e7a64b4a56d755",
       "_shrinkwrap": null,
       "_spec": "aws4@^1.2.1",
    -  "_where": "/Users/rebecca/code/npm/node_modules/request",
    +  "_where": "/Users/ogd/Documents/projects/npm/npm/node_modules/request",
       "author": {
         "name": "Michael Hart",
         "email": "michael.hart.au@gmail.com",
    @@ -62,10 +61,10 @@
       },
       "directories": {},
       "dist": {
    -    "shasum": "fde7d5292466d230e5ee0f4e038d9dfaab08fc61",
    -    "tarball": "https://registry.npmjs.org/aws4/-/aws4-1.4.1.tgz"
    +    "shasum": "0a29ffb79c31c9e712eeb087e8e7a64b4a56d755",
    +    "tarball": "https://registry.npmjs.org/aws4/-/aws4-1.5.0.tgz"
       },
    -  "gitHead": "f126d3ff80be1ddde0fc6b50bb51a7f199547e81",
    +  "gitHead": "ba136334ee08884c6042c8578a22e376233eef34",
       "homepage": "https://github.com/mhart/aws4#readme",
       "keywords": [
         "amazon",
    @@ -137,5 +136,5 @@
       "scripts": {
         "test": "mocha ./test/fast.js ./test/slow.js -b -t 100s -R list"
       },
    -  "version": "1.4.1"
    +  "version": "1.5.0"
     }
    diff --git a/node_modules/request/node_modules/bl/.jshintrc b/node_modules/request/node_modules/bl/.jshintrc
    deleted file mode 100644
    index c8ef3ca40..000000000
    --- a/node_modules/request/node_modules/bl/.jshintrc
    +++ /dev/null
    @@ -1,59 +0,0 @@
    -{
    -    "predef": [ ]
    -  , "bitwise": false
    -  , "camelcase": false
    -  , "curly": false
    -  , "eqeqeq": false
    -  , "forin": false
    -  , "immed": false
    -  , "latedef": false
    -  , "noarg": true
    -  , "noempty": true
    -  , "nonew": true
    -  , "plusplus": false
    -  , "quotmark": true
    -  , "regexp": false
    -  , "undef": true
    -  , "unused": true
    -  , "strict": false
    -  , "trailing": true
    -  , "maxlen": 120
    -  , "asi": true
    -  , "boss": true
    -  , "debug": true
    -  , "eqnull": true
    -  , "esnext": true
    -  , "evil": true
    -  , "expr": true
    -  , "funcscope": false
    -  , "globalstrict": false
    -  , "iterator": false
    -  , "lastsemic": true
    -  , "laxbreak": true
    -  , "laxcomma": true
    -  , "loopfunc": true
    -  , "multistr": false
    -  , "onecase": false
    -  , "proto": false
    -  , "regexdash": false
    -  , "scripturl": true
    -  , "smarttabs": false
    -  , "shadow": false
    -  , "sub": true
    -  , "supernew": false
    -  , "validthis": true
    -  , "browser": true
    -  , "couch": false
    -  , "devel": false
    -  , "dojo": false
    -  , "mootools": false
    -  , "node": true
    -  , "nonstandard": true
    -  , "prototypejs": false
    -  , "rhino": false
    -  , "worker": true
    -  , "wsh": false
    -  , "nomen": false
    -  , "onevar": false
    -  , "passfail": false
    -}
    \ No newline at end of file
    diff --git a/node_modules/request/node_modules/bl/.npmignore b/node_modules/request/node_modules/bl/.npmignore
    deleted file mode 100644
    index 40b878db5..000000000
    --- a/node_modules/request/node_modules/bl/.npmignore
    +++ /dev/null
    @@ -1 +0,0 @@
    -node_modules/
    \ No newline at end of file
    diff --git a/node_modules/request/node_modules/bl/.travis.yml b/node_modules/request/node_modules/bl/.travis.yml
    deleted file mode 100644
    index 5cb0480b4..000000000
    --- a/node_modules/request/node_modules/bl/.travis.yml
    +++ /dev/null
    @@ -1,13 +0,0 @@
    -sudo: false
    -language: node_js
    -node_js:
    -  - '0.10'
    -  - '0.12'
    -  - '4'
    -  - '5'
    -branches:
    -  only:
    -    - master
    -notifications:
    -  email:
    -    - rod@vagg.org
    diff --git a/node_modules/request/node_modules/bl/LICENSE.md b/node_modules/request/node_modules/bl/LICENSE.md
    deleted file mode 100644
    index ccb24797c..000000000
    --- a/node_modules/request/node_modules/bl/LICENSE.md
    +++ /dev/null
    @@ -1,13 +0,0 @@
    -The MIT License (MIT)
    -=====================
    -
    -Copyright (c) 2014 bl contributors
    ------------------------------------
    -
    -*bl contributors listed at <https://github.com/rvagg/bl#contributors>*
    -
    -Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the "Software"), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:
    -
    -The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.
    -
    -THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.
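
The `canonicalString()` hunk in `aws4.js` changes how the payload hash is chosen. Below is a minimal sketch of the resulting three-way choice as a hypothetical `payloadHash` helper (not part of aws4). It assumes aws4's internal `hash(body, 'hex')` is a hex-encoded SHA-256 digest, which is the payload hash Signature Version 4 expects.

```javascript
var crypto = require('crypto')

// Hypothetical helper mirroring the bodyHash expression in canonicalString();
// assumes aws4's internal hash() is hex-encoded SHA-256.
function payloadHash(service, signQuery, isCodeCommitGit, body) {
  // presigned S3 query-string requests do not hash the body
  if (service === 's3' && signQuery) return 'UNSIGNED-PAYLOAD'
  // new in aws4 1.5.0: CodeCommit Git access uses an empty payload hash
  if (isCodeCommitGit) return ''
  // everything else: hex SHA-256 of the body (empty string when absent)
  return crypto.createHash('sha256').update(body || '', 'utf8').digest('hex')
}

console.log(payloadHash('s3', true, false, 'data')) // UNSIGNED-PAYLOAD
console.log(JSON.stringify(payloadHash('codecommit', false, true, 'data'))) // ""
console.log(payloadHash('sqs', false, false, ''))
// e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855
```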
    diff --git a/node_modules/request/node_modules/bl/README.md b/node_modules/request/node_modules/bl/README.md
    deleted file mode 100644
    index f7044db26..000000000
    --- a/node_modules/request/node_modules/bl/README.md
    +++ /dev/null
    @@ -1,200 +0,0 @@
    -# bl *(BufferList)*
    -
    -[](https://travis-ci.org/rvagg/bl)
    -
    -**A Node.js Buffer list collector, reader and streamer thingy.**
    -
    -[](https://nodei.co/npm/bl/)
    -[](https://nodei.co/npm/bl/)
    -
    -**bl** is a storage object for collections of Node Buffers, exposing them with the main Buffer readable API. Also works as a duplex stream so you can collect buffers from a stream that emits them and emit buffers to a stream that consumes them!
    -
    -The original buffers are kept intact and copies are only done as necessary. Any reads that require the use of a single original buffer will return a slice of that buffer only (which references the same memory as the original buffer). Reads that span buffers perform concatenation as required and return the results transparently.
    -
    -```js
    -const BufferList = require('bl')
    -
    -var bl = new BufferList()
    -bl.append(new Buffer('abcd'))
    -bl.append(new Buffer('efg'))
    -bl.append('hi') // bl will also accept & convert Strings
    -bl.append(new Buffer('j'))
    -bl.append(new Buffer([ 0x3, 0x4 ]))
    -
    -console.log(bl.length) // 12
    -
    -console.log(bl.slice(0, 10).toString('ascii')) // 'abcdefghij'
    -console.log(bl.slice(3, 10).toString('ascii')) // 'defghij'
    -console.log(bl.slice(3, 6).toString('ascii')) // 'def'
    -console.log(bl.slice(3, 8).toString('ascii')) // 'defgh'
    -console.log(bl.slice(5, 10).toString('ascii')) // 'fghij'
    -
    -// or just use toString!
    -console.log(bl.toString()) // 'abcdefghij\u0003\u0004'
    -console.log(bl.toString('ascii', 3, 8)) // 'defgh'
    -console.log(bl.toString('ascii', 5, 10)) // 'fghij'
    -
    -// other standard Buffer readables
    -console.log(bl.readUInt16BE(10)) // 0x0304
    -console.log(bl.readUInt16LE(10)) // 0x0403
    -```
    -
    -Give it a callback in the constructor and use it just like **[concat-stream](https://github.com/maxogden/node-concat-stream)**:
    -
    -```js
    -const bl = require('bl')
    -    , fs = require('fs')
    -
    -fs.createReadStream('README.md')
    -  .pipe(bl(function (err, data) { // note 'new' isn't strictly required
    -    // `data` is a complete Buffer object containing the full data
    -    console.log(data.toString())
    -  }))
    -```
    -
    -Note that when you use the *callback* method like this, the resulting `data` parameter is a concatenation of all `Buffer` objects in the list. If you want to avoid the overhead of this concatenation (in cases of extreme performance consciousness), then avoid the *callback* method and just listen to `'end'` instead, like a standard Stream.
    -
    -Or to fetch a URL using [hyperquest](https://github.com/substack/hyperquest) (should work with [request](http://github.com/mikeal/request) and even plain Node http too!):
    -```js
    -const hyperquest = require('hyperquest')
    -    , bl = require('bl')
    -    , url = 'https://raw.github.com/rvagg/bl/master/README.md'
    -
    -hyperquest(url).pipe(bl(function (err, data) {
    -  console.log(data.toString())
    -}))
    -```
    -
    -Or, use it as a readable stream to recompose a list of Buffers to an output source:
    -
    -```js
    -const BufferList = require('bl')
    -    , fs = require('fs')
    -
    -var bl = new BufferList()
    -bl.append(new Buffer('abcd'))
    -bl.append(new Buffer('efg'))
    -bl.append(new Buffer('hi'))
    -bl.append(new Buffer('j'))
    -
    -bl.pipe(fs.createWriteStream('gibberish.txt'))
    -```
    -
    -## API
    -
    - * <a href="#ctor"><code><b>new BufferList([ callback ])</b></code></a>
    - * <a href="#length"><code>bl.<b>length</b></code></a>
    - * <a href="#append"><code>bl.<b>append(buffer)</b></code></a>
    - * <a href="#get"><code>bl.<b>get(index)</b></code></a>
    - * <a href="#slice"><code>bl.<b>slice([ start[, end ] ])</b></code></a>
    - * <a href="#copy"><code>bl.<b>copy(dest, [ destStart, [ srcStart [, srcEnd ] ] ])</b></code></a>
    - * <a href="#duplicate"><code>bl.<b>duplicate()</b></code></a>
    - * <a href="#consume"><code>bl.<b>consume(bytes)</b></code></a>
    - * <a href="#toString"><code>bl.<b>toString([encoding, [ start, [ end ]]])</b></code></a>
    - * <a href="#readXX"><code>bl.<b>readDoubleBE()</b></code>, <code>bl.<b>readDoubleLE()</b></code>, <code>bl.<b>readFloatBE()</b></code>, <code>bl.<b>readFloatLE()</b></code>, <code>bl.<b>readInt32BE()</b></code>, <code>bl.<b>readInt32LE()</b></code>, <code>bl.<b>readUInt32BE()</b></code>, <code>bl.<b>readUInt32LE()</b></code>, <code>bl.<b>readInt16BE()</b></code>, <code>bl.<b>readInt16LE()</b></code>, <code>bl.<b>readUInt16BE()</b></code>, <code>bl.<b>readUInt16LE()</b></code>, <code>bl.<b>readInt8()</b></code>, <code>bl.<b>readUInt8()</b></code></a>
    - * <a href="#streams">Streams</a>
    -
    ----------------------------------------------------------
    -<a name="ctor"></a>
    -### new BufferList([ callback | Buffer | Buffer array | BufferList | BufferList array | String ])
    -The constructor takes an optional callback, if supplied, the callback will be called with an error argument followed by a reference to the **bl** instance, when `bl.end()` is called (i.e. from a piped stream). This is a convenient method of collecting the entire contents of a stream, particularly when the stream is *chunky*, such as a network stream.
    -
    -Normally, no arguments are required for the constructor, but you can initialise the list by passing in a single `Buffer` object or an array of `Buffer` object.
    -
    -`new` is not strictly required, if you don't instantiate a new object, it will be done automatically for you so you can create a new instance simply with:
    -
    -```js
    -var bl = require('bl')
    -var myinstance = bl()
    -
    -// equivilant to:
    -
    -var BufferList = require('bl')
    -var myinstance = new BufferList()
    -```
    -
    ----------------------------------------------------------
    -<a name="length"></a>
    -### bl.length
    -Get the length of the list in bytes. This is the sum of the lengths of all of the buffers contained in the list, minus any initial offset for a semi-consumed buffer at the beginning. Should accurately represent the total number of bytes that can be read from the list.
    -
    ----------------------------------------------------------
    -<a name="append"></a>
    -### bl.append(Buffer | Buffer array | BufferList | BufferList array | String)
    -`append(buffer)` adds an additional buffer or BufferList to the internal list. `this` is returned so it can be chained.
    -
    ----------------------------------------------------------
    -<a name="get"></a>
    -### bl.get(index)
    -`get()` will return the byte at the specified index.
    -
    ----------------------------------------------------------
    -<a name="slice"></a>
    -### bl.slice([ start, [ end ] ])
    -`slice()` returns a new `Buffer` object containing the bytes within the range specified. Both `start` and `end` are optional and will default to the beginning and end of the list respectively.
    -
    -If the requested range spans a single internal buffer then a slice of that buffer will be returned which shares the original memory range of that Buffer. If the range spans multiple buffers then copy operations will likely occur to give you a uniform Buffer.
    -
    ----------------------------------------------------------
    -<a name="copy"></a>
    -### bl.copy(dest, [ destStart, [ srcStart [, srcEnd ] ] ])
    -`copy()` copies the content of the list in the `dest` buffer, starting from `destStart` and containing the bytes within the range specified with `srcStart` to `srcEnd`. `destStart`, `start` and `end` are optional and will default to the beginning of the `dest` buffer, and the beginning and end of the list respectively.
    -
    ----------------------------------------------------------
    -<a name="duplicate"></a>
    -### bl.duplicate()
    -`duplicate()` performs a **shallow-copy** of the list. The internal Buffers remains the same, so if you change the underlying Buffers, the change will be reflected in both the original and the duplicate. This method is needed if you want to call `consume()` or `pipe()` and still keep the original list.Example:
    -
    -```js
    -var bl = new BufferList()
    -
    -bl.append('hello')
    -bl.append(' world')
    -bl.append('\n')
    -
    -bl.duplicate().pipe(process.stdout, { end: false })
    -
    -console.log(bl.toString())
    -```
    -
    ----------------------------------------------------------
    -<a name="consume"></a>
    -### bl.consume(bytes)
    -`consume()` will shift bytes *off the start of the list*. The number of bytes consumed don't need to line up with the sizes of the internal Buffers—initial offsets will be calculated accordingly in order to give you a consistent view of the data.
    -
    ----------------------------------------------------------
    -<a name="toString"></a>
    -### bl.toString([encoding, [ start, [ end ]]])
    -`toString()` will return a string representation of the buffer. The optional `start` and `end` arguments are passed on to `slice()`, while the `encoding` is passed on to `toString()` of the resulting Buffer. See the [Buffer#toString()](http://nodejs.org/docs/latest/api/buffer.html#buffer_buf_tostring_encoding_start_end) documentation for more information.
    -
    ----------------------------------------------------------
    -<a name="readXX"></a>
    -### bl.readDoubleBE(), bl.readDoubleLE(), bl.readFloatBE(), bl.readFloatLE(), bl.readInt32BE(), bl.readInt32LE(), bl.readUInt32BE(), bl.readUInt32LE(), bl.readInt16BE(), bl.readInt16LE(), bl.readUInt16BE(), bl.readUInt16LE(), bl.readInt8(), bl.readUInt8()
    -
    -All of the standard byte-reading methods of the `Buffer` interface are implemented and will operate across internal Buffer boundaries transparently.
    -
    -See the <b><code>[Buffer](http://nodejs.org/docs/latest/api/buffer.html)</code></b> documentation for how these work.
    -
    ----------------------------------------------------------
    -<a name="streams"></a>
    -### Streams
    -**bl** is a Node **[Duplex Stream](http://nodejs.org/docs/latest/api/stream.html#stream_class_stream_duplex)**, so it can be read from and written to like a standard Node stream. You can also `pipe()` to and from a **bl** instance.
    -
    ----------------------------------------------------------
    -
    -## Contributors
    -
    -**bl** is brought to you by the following hackers:
    -
    - * [Rod Vagg](https://github.com/rvagg)
    - * [Matteo Collina](https://github.com/mcollina)
    - * [Jarett Cruger](https://github.com/jcrugzz)
    -
    -=======
    -
    -<a name="license"></a>
    -## License & copyright
    -
    -Copyright (c) 2013-2014 bl contributors (listed above).
    -
    -bl is licensed under the MIT license. All rights not explicitly granted in the MIT license are reserved. See the included LICENSE.md file for more details.
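
Entry #2424 in the CHANGELOG hunk says request now handles buffers directly instead of using `bl`, which is why the `bl` files are deleted here. The sketch below shows the general pattern with core APIs only: keep the chunks in an array and call `Buffer.concat` once at `'end'`. It is an illustration, not request's actual code; `collect` is a hypothetical name, and it assumes the stream emits Buffers (no `setEncoding` call).

```javascript
var PassThrough = require('stream').PassThrough

// Hypothetical helper: gather every chunk a readable stream emits into one
// Buffer, which is what bl's callback form provided.
// Assumes the stream emits Buffers, not strings.
function collect(stream, callback) {
  var chunks = []
  var length = 0
  stream.on('data', function (chunk) {
    chunks.push(chunk) // keep the original Buffers; nothing is copied yet
    length += chunk.length
  })
  stream.once('error', callback)
  stream.once('end', function () {
    // one concatenation once all chunks have arrived
    callback(null, Buffer.concat(chunks, length))
  })
}

var source = new PassThrough()
collect(source, function (err, body) {
  if (err) throw err
  console.log(body.toString()) // abcdefghij
})
source.write('abcd')
source.write('efg')
source.end('hij')
```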
    diff --git a/node_modules/request/node_modules/bl/bl.js b/node_modules/request/node_modules/bl/bl.js
    deleted file mode 100644
    index f585df172..000000000
    --- a/node_modules/request/node_modules/bl/bl.js
    +++ /dev/null
    @@ -1,243 +0,0 @@
    -var DuplexStream = require('readable-stream/duplex')
    -  , util = require('util')
    -
    -
    -function BufferList (callback) {
    -  if (!(this instanceof BufferList))
    -    return new BufferList(callback)
    -
    -  this._bufs = []
    -  this.length = 0
    -
    -  if (typeof callback == 'function') {
    -    this._callback = callback
    -
    -    var piper = function piper (err) {
    -      if (this._callback) {
    -        this._callback(err)
    -        this._callback = null
    -      }
    -    }.bind(this)
    -
    -    this.on('pipe', function onPipe (src) {
    -      src.on('error', piper)
    -    })
    -    this.on('unpipe', function onUnpipe (src) {
    -      src.removeListener('error', piper)
    -    })
    -  } else {
    -    this.append(callback)
    -  }
    -
    -  DuplexStream.call(this)
    -}
    -
    -
    -util.inherits(BufferList, DuplexStream)
    -
    -
    -BufferList.prototype._offset = function _offset (offset) {
    -  var tot = 0, i = 0, _t
    -  for (; i < this._bufs.length; i++) {
    -    _t = tot + this._bufs[i].length
    -    if (offset < _t)
    -      return [ i, offset - tot ]
    -    tot = _t
    -  }
    -}
    -
    -
    -BufferList.prototype.append = function append (buf) {
    -  var i = 0
    -    , newBuf
    -
    -  if (Array.isArray(buf)) {
    -    for (; i < buf.length; i++)
    -      this.append(buf[i])
    -  } else if (buf instanceof BufferList) {
    -    // unwrap argument into individual BufferLists
    -    for (; i < buf._bufs.length; i++)
    -      this.append(buf._bufs[i])
    -  } else if (buf != null) {
    -    // coerce number arguments to strings, since Buffer(number) does
    -    // uninitialized memory allocation
    -    if (typeof buf == 'number')
    -      buf = buf.toString()
    -
    -    newBuf = Buffer.isBuffer(buf) ? buf : new Buffer(buf)
    -    this._bufs.push(newBuf)
    -    this.length += newBuf.length
    -  }
    -
    -  return this
    -}
    -
    -
    -BufferList.prototype._write = function _write (buf, encoding, callback) {
    -  this.append(buf)
    -
    -  if (typeof callback == 'function')
    -    callback()
    -}
    -
    -
    -BufferList.prototype._read = function _read (size) {
    -  if (!this.length)
    -    return this.push(null)
    -
    -  size = Math.min(size, this.length)
    -  this.push(this.slice(0, size))
    -  this.consume(size)
    -}
    -
    -
    -BufferList.prototype.end = function end (chunk) {
    -  DuplexStream.prototype.end.call(this, chunk)
    -
    -  if (this._callback) {
    -    this._callback(null, this.slice())
    -    this._callback = null
    -  }
    -}
    -
    -
    -BufferList.prototype.get = function get (index) {
    -  return this.slice(index, index + 1)[0]
    -}
    -
    -
    -BufferList.prototype.slice = function slice (start, end) {
    -  return this.copy(null, 0, start, end)
    -}
    -
    -
    -BufferList.prototype.copy = function copy (dst, dstStart, srcStart, srcEnd) {
    -  if (typeof srcStart != 'number' || srcStart < 0)
    -    srcStart = 0
    -  if (typeof srcEnd != 'number' || srcEnd > this.length)
    -    srcEnd = this.length
    -  if (srcStart >= this.length)
    -    return dst || new Buffer(0)
    -  if (srcEnd <= 0)
    -    return dst || new Buffer(0)
    -
    -  var copy = !!dst
    -    , off = this._offset(srcStart)
    -    , len = srcEnd - srcStart
    -    , bytes = len
    -    , bufoff = (copy && dstStart) || 0
    -    , start = off[1]
    -    , l
    -    , i
    -
    -  // copy/slice everything
    -  if (srcStart === 0 && srcEnd == this.length) {
    -    if (!copy) // slice, just return a full concat
    -      return Buffer.concat(this._bufs)
    -
    -    // copy, need to copy individual buffers
    -    for (i = 0; i < this._bufs.length; i++) {
    -      this._bufs[i].copy(dst, bufoff)
    -      bufoff += this._bufs[i].length
    -    }
    -
    -    return dst
    -  }
    -
    -  // easy, cheap case where it's a subset of one of the buffers
    -  if (bytes <= this._bufs[off[0]].length - start) {
    -    return copy
    -      ? this._bufs[off[0]].copy(dst, dstStart, start, start + bytes)
    -      : this._bufs[off[0]].slice(start, start + bytes)
    -  }
    -
    -  if (!copy) // a slice, we need something to copy in to
    -    dst = new Buffer(len)
    -
    -  for (i = off[0]; i < this._bufs.length; i++) {
    -    l = this._bufs[i].length - start
    -
    -    if (bytes > l) {
    -      this._bufs[i].copy(dst, bufoff, start)
    -    } else {
    -      this._bufs[i].copy(dst, bufoff, start, start + bytes)
    -      break
    -    }
    -
    -    bufoff += l
    -    bytes -= l
    -
    -    if (start)
    -      start = 0
    -  }
    -
    -  return dst
    -}
    -
    -BufferList.prototype.toString = function toString (encoding, start, end) {
    -  return this.slice(start, end).toString(encoding)
    -}
    -
    -BufferList.prototype.consume = function consume (bytes) {
    -  while (this._bufs.length) {
    -    if (bytes >= this._bufs[0].length) {
    -      bytes -= this._bufs[0].length
    -      this.length -= this._bufs[0].length
    -      this._bufs.shift()
    -    } else {
    -      this._bufs[0] = this._bufs[0].slice(bytes)
    -      this.length -= bytes
    -      break
    -    }
    -  }
    -  return this
    -}
    -
    -
    -BufferList.prototype.duplicate = function duplicate () {
    -  var i = 0
    -    , copy = new BufferList()
    -
    -  for (; i < this._bufs.length; i++)
    -    copy.append(this._bufs[i])
    -
    -  return copy
    -}
    -
    -
    -BufferList.prototype.destroy = function destroy () {
    -  this._bufs.length = 0
    -  this.length = 0
    -  this.push(null)
    -}
    -
    -
    -;(function () {
    -  var methods = {
    -      'readDoubleBE' : 8
    -    , 'readDoubleLE' : 8
    -    , 'readFloatBE' : 4
    -    , 'readFloatLE' : 4
    -    , 'readInt32BE' : 4
    -    , 'readInt32LE' : 4
    -    , 'readUInt32BE' : 4
    -    , 'readUInt32LE' : 4
    -    , 'readInt16BE' : 2
    -    , 'readInt16LE' : 2
    -    , 'readUInt16BE' : 2
    -    , 'readUInt16LE' : 2
    -    , 'readInt8' : 1
    -    , 'readUInt8' : 1
    -  }
    -
    -  for (var m in methods) {
    -    (function (m) {
    -      BufferList.prototype[m] = function (offset) {
    -        return this.slice(offset, offset + methods[m])[m](0)
    -      }
    -    }(m))
    -  }
    -}())
    -
    -
    -module.exports = BufferList
    diff --git a/node_modules/request/node_modules/bl/node_modules/readable-stream/.npmignore b/node_modules/request/node_modules/bl/node_modules/readable-stream/.npmignore
    deleted file mode 100644
    index 38344f87a..000000000
    --- a/node_modules/request/node_modules/bl/node_modules/readable-stream/.npmignore
    +++ /dev/null
    @@ -1,5 +0,0 @@
    -build/
    -test/
    -examples/
    -fs.js
    -zlib.js
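
The `followOriginalHttpMethod` option documented in the request README hunk changes a single line of `Redirect.prototype.onResponse` in `lib/redirect.js`. The sketch below isolates that branch in a hypothetical `methodAfterRedirect` helper (not part of request). It does not model whether a redirect is followed at all; `lib/redirect.js` decides that elsewhere.

```javascript
// Hypothetical helper reproducing only the method-selection branch of
// Redirect.prototype.onResponse after this change.
function methodAfterRedirect(method, statusCode, opts) {
  if (opts.followAllRedirects && method !== 'HEAD' &&
      statusCode !== 401 && statusCode !== 307) {
    // 2.77.0 (#2435): keep the original verb when followOriginalHttpMethod is set
    return opts.followOriginalHttpMethod ? method : 'GET'
  }
  // outside that branch (e.g. a 307 response) the method is left unchanged
  return method
}

console.log(methodAfterRedirect('POST', 302, { followAllRedirects: true })) // GET
console.log(methodAfterRedirect('POST', 302, {
  followAllRedirects: true,
  followOriginalHttpMethod: true
})) // POST
```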